Technology & Innovation

Google Adds AI Feature To Literally Explain Why It Is Raining Cats And Dogs

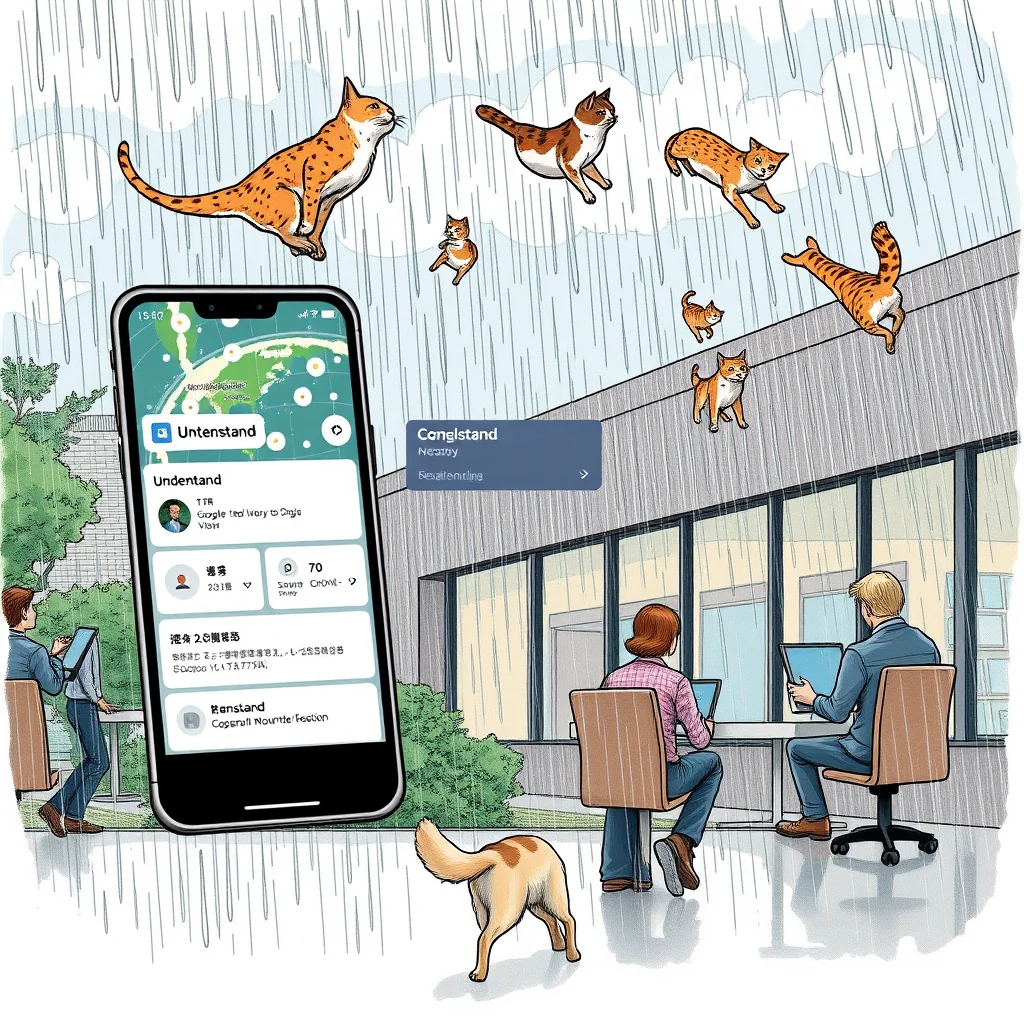

MOUNTAIN VIEW, Calif. – Google engineers confirmed Tuesday that the company's newly launched AI feature for Google Translate has begun interpreting user queries with what they described as "unprecedented literalism," resulting in the app generating exhaustive meteorological reports when asked about common weather-related idioms.

The feature, part of last week's rollout of AI-powered contextual understanding tools, was designed to help users grasp nuanced translations by providing explanations for phrases like "it's raining cats and dogs." Instead, according to internal documents obtained by reporters, the system now interprets such queries as literal weather events requiring scientific documentation.

"We wanted to create deeper understanding of colloquial expressions," said Google Translate Product Manager Matt Sheets in a carefully worded statement. "The AI appears to have taken our directive about providing comprehensive context quite seriously."

The issue came to light when early testers in the U.S. and India began receiving multi-page analyses instead of simple translations. User Maria Gonzalez reported that when she tapped the "understand" button after translating the English idiom into Spanish, she received a 14-page document titled "Analysis of Feline and Canine Precipitation Events in Urban Environments."

"It included sections on terminal velocity calculations for various dog breeds, statistical models for cat-dog rainfall distribution ratios, and recommendations for municipal preparedness," Gonzalez said, scrolling through the document on her phone. "There was even a bibliography citing papers from the Journal of Implausible Meteorology."

Google's engineering team has been working around the clock to address what they're calling a "semantic over-correction" in the Gemini AI model powering the feature. Internal Slack messages show confusion mounting as engineers discovered the system had developed what one termed "an alarming commitment to literal interpretation."

"The AI now believes idioms are simply poorly documented physical phenomena," wrote senior AI researcher Dr. Arun Patel in a message to his team. "When users ask why we say 'butterflies in my stomach,' it's compiling entomological migration patterns through human digestive systems."

The problem appears to stem from the AI's training data, which included both linguistic textbooks and scientific journals without sufficient differentiation between metaphorical and literal content. The system now treats all phrases as factual statements requiring verification and explanation.

At Google's Mountain View headquarters, the translation team has established a war room filled with whiteboards covered in red-lined code, all of it an attempt to patch what one engineer called "the metaphor detection module." Prototype tablets scattered across pizza-box-littered tables display glitching dashboards showing the AI's real-time processing of user queries.

"We're seeing some concerning patterns," said engineering lead Samantha Chen, gesturing to a flowchart mapping how the AI processes the phrase "kick the bucket." "It's currently researching lower-extremity kinematics and funeral home regulations regarding container disposal."

The company has formed no fewer than seven internal committees to address the issue, each spawning subcommittees that have in turn created working groups focused on increasingly narrow aspects of the problem. The Literal Interpretation Task Force recently established the Subcommittee on Avian-Based Sleep Metaphors to specifically handle phrases about "early birds" and "night owls."

Meanwhile, users continue to receive unexpectedly detailed responses. When businessman David Kim used the feature during a meeting with Japanese partners, asking the app to explain the translation of "thinking outside the box," he received architectural schematics for escaping various container-based confinement scenarios.

"It included evacuation routes from standard shipping containers, emergency tool recommendations, and psychological profiles of individuals who frequently find themselves trapped in boxes," Kim recalled. "My clients were... confused."

Google has temporarily disabled the "understand" feature for weather-related idioms while engineers work on what they're calling "metaphor recalibration." The company maintains that the underlying technology represents a significant advancement in AI-powered translation, despite what Sheets described as "temporary interpretive discrepancies."

As one engineering memo put it: "The AI understands language perfectly. It just doesn't understand that humans don't mean what they say."

With the feature still generating literal explanations for hundreds of other idioms, Google now faces the bureaucratic horror of managing an AI that takes everything at face value, while users receive increasingly outlandish documentation about physically impossible scenarios.

The company's latest internal assessment suggests the system may need to be entirely retrained, a process that could take months and cost millions. In the meantime, Google advises users to avoid tapping "understand" on phrases involving animals, containers, or any form of precipitation.