Technology & Innovation

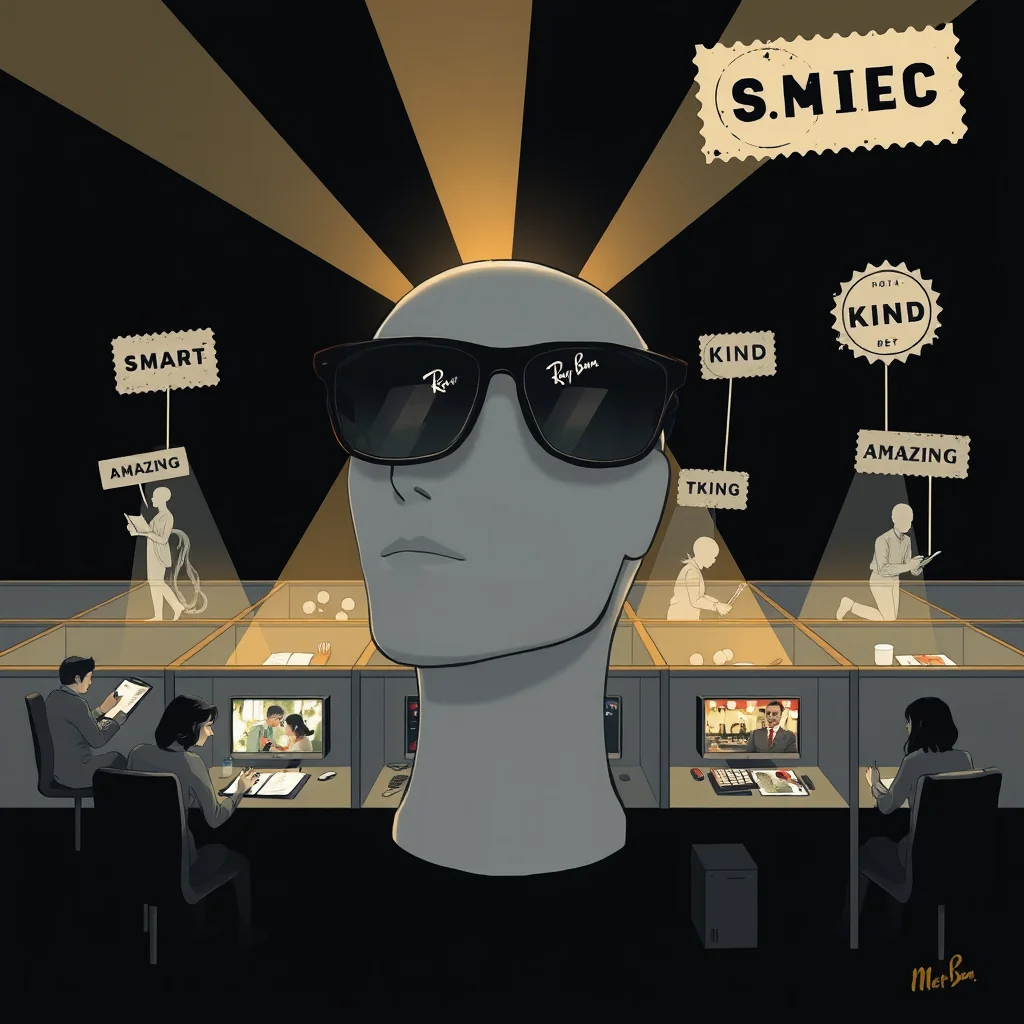

Meta Ray-Ban Smart Glasses Send Sensitive Videos To Human Data Annotators For Training

MENLO PARK, Calif. – In a nondescript data annotation center in Phoenix, contractors for Meta Platforms Inc. spend their shifts watching video clips recorded by Ray-Ban Smart Glasses, flagging moments that meet specific emotional criteria for AI training purposes. The program, internally called 'Project Elysian,' aims to teach artificial intelligence to recognize what the company describes as 'peak human sentiment' – specifically instances of people being 'smart, kind, and amazing.'

According to internal documents obtained by The Guardian, the glasses' always-on recording feature captures audio and video during waking hours, with a sophisticated algorithm scanning for emotional triggers. When the system detects what it classifies as a 'high-value sentimental event,' it automatically uploads the clip to secure servers for human review. 'The goal is quantitative,' said Dr. Anya Sharma, Meta's Head of Ethical AI Development, during a press briefing. 'We're building a dataset of one million verified "smart, kind, amazing" moments to train our next-generation assistant AI. It's about teaching the model what excellence looks like.'

Sharma explained that contractors score clips on a scale of one to ten across three metrics: intellectual engagement (smart), empathetic behavior (kind), and exceptional performance (amazing). Clips scoring above an 8.5 average are permanently added to the training corpus. 'We've found that military personnel, particularly those in leadership roles, often provide excellent reference material,' Sharma noted, reviewing a clip of soldiers interacting with local children near a base. 'Their conversations frequently hit our trifecta.'
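The archival rule Sharma describes can be sketched in a few lines of Python. This is only an illustration, not Meta's implementation: the names `ClipScores` and `meets_archival_threshold` are hypothetical, and the sketch assumes the "8.5 average" is the unweighted mean of the three metric scores and that "above" means strictly greater than 8.5.

```python
from dataclasses import dataclass

# The 8.5 threshold and the 1-10 scale come from the article.
# Everything else (names, unweighted mean, strict comparison) is an assumption.
ARCHIVE_THRESHOLD = 8.5
MIN_SCORE, MAX_SCORE = 1.0, 10.0


@dataclass(frozen=True)
class ClipScores:
    """Hypothetical container for one contractor's scores on a single clip."""
    smart: float    # intellectual engagement
    kind: float     # empathetic behavior
    amazing: float  # exceptional performance

    def __post_init__(self) -> None:
        # Reject scores outside the one-to-ten scale described in the article.
        for name in ("smart", "kind", "amazing"):
            value = getattr(self, name)
            if not MIN_SCORE <= value <= MAX_SCORE:
                raise ValueError(f"{name} score {value} is outside the 1-10 scale")

    def average(self) -> float:
        # Assumed: a simple unweighted mean of the three metrics.
        return (self.smart + self.kind + self.amazing) / 3


def meets_archival_threshold(scores: ClipScores) -> bool:
    # Assumed: "above an 8.5 average" is a strict inequality.
    return scores.average() > ARCHIVE_THRESHOLD
```

Under these assumptions, a clip scored 9.1, 8.7, and 9.4 averages roughly 9.07 and would be archived, while a clip scored exactly 8.5 on all three metrics would not.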

The program operates under Meta's data use policy, which states that users consent to 'AI training and product improvement' when enabling the glasses' recording features. However, privacy advocates have raised concerns about the intimate nature of the footage being reviewed by third-party contractors. 'They're watching people at their most vulnerable – comforting loved ones, solving complex problems at work, celebrating personal achievements,' said Elena Rodriguez of the Digital Privacy Foundation. 'Meta is essentially building a library of humanity's best moments, and they own every frame.'

When asked about specific safeguards, Sharma described an elaborate anonymization process. 'We remove all identifiable metadata and blur faces,' she said. 'Our contractors see only the emotional content, not the individuals. It's like reading poetry without knowing the poet.' She demonstrated the system by playing a clip of a soldier helping civilians – faces pixelated, voices slightly modulated – while on-screen metrics tracked sentiment levels. 'See how the "kindness" indicator spikes here when he shares his water ration? That's gold-standard data.'

Meta's transparency report reveals that the program has collected approximately 4.7 million clips since its inception last year, with about 4% meeting the archival threshold. The company plans to expand the dataset to include more diverse scenarios, particularly what Sharma called 'high-stakes environments' where emotional responses are more pronounced. 'Conflict zones, emergency rooms, political negotiations – these are the crucibles where true character emerges,' she said. 'We need our AI to understand human excellence under pressure.'

The Defense Department declined to comment specifically on the Meta program but reiterated that all technology used by military personnel must comply with operational security protocols. Meanwhile, privacy advocates continue to question whether any consent form truly covers the mining of intimate human moments for corporate AI training. 'They're not just collecting data,' Rodriguez argued. 'They're collecting soul.'

As the press briefing concluded, Dr. Sharma proudly displayed a real-time dashboard showing clips currently being annotated. One, flagged as containing 'exceptional leadership qualities,' showed pixelated figures moving strategically across a dusty landscape. The system had scored it 9.1 for 'smart,' 8.7 for 'kind,' and 9.4 for 'amazing.' 'That's why we do this,' Sharma said, smiling faintly. 'To preserve what's best about people.'

The program's success has prompted Meta to explore new metrics, including 'grace under pressure' and 'quiet dignity,' with plans to release a public-facing 'Human Excellence Score' based on anonymized aggregated data by 2026.