Technology Policy

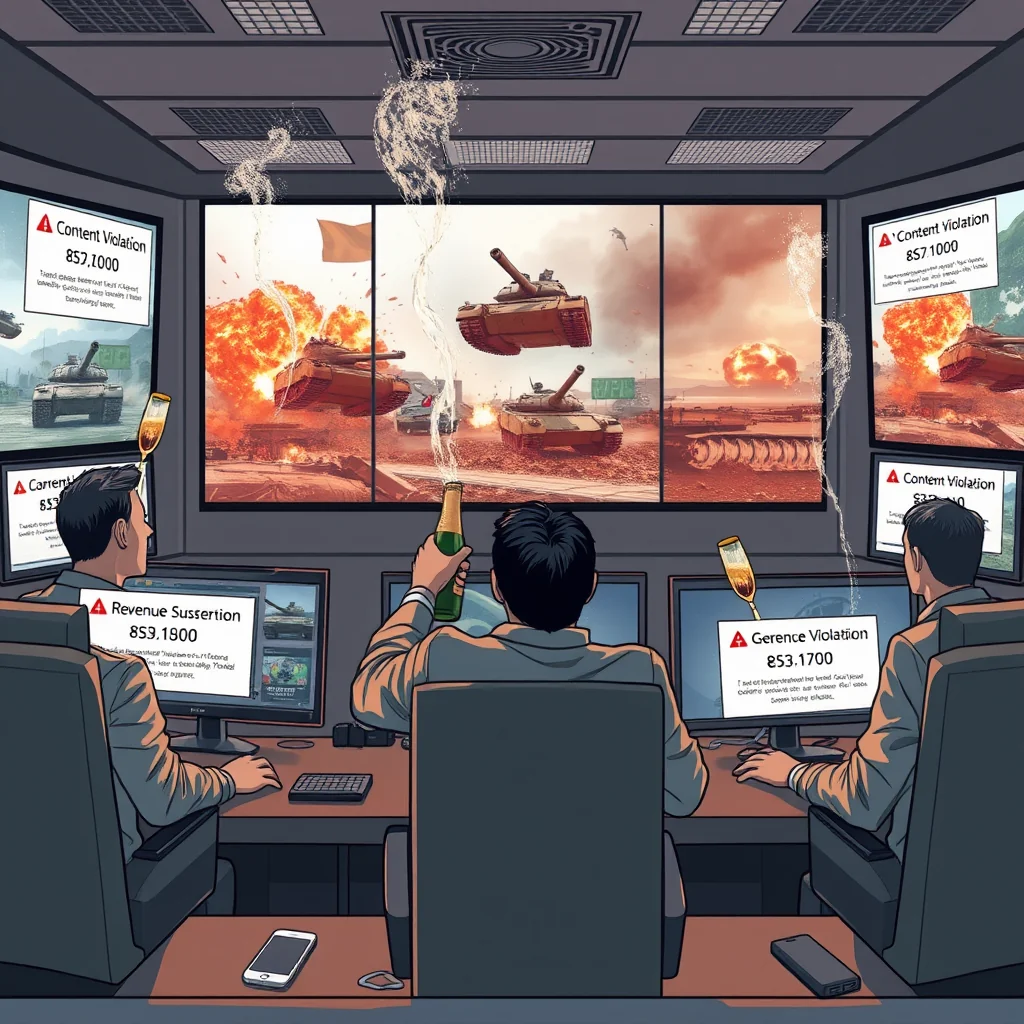

X Announces Users Will Be Banned From Earning Revenue If They Post Unlabeled AI-Generated War Videos

In a move that X executives described as "essential for maintaining platform integrity," the social media company announced Tuesday that users who post unlabeled AI-generated war videos will have their revenue suspended. The policy comes after what the company called "an unacceptable proliferation of synthetic conflict footage" following recent Middle East tensions.

"Our commitment to authentic user engagement requires clear boundaries around manufactured content," said X's head of monetization, David Chen, during a hastily arranged press briefing. "When users violate these boundaries, we must protect the ecosystem." Chen stood before a wall of monitors displaying real-time engagement metrics, occasionally tapping his tablet to highlight what he called "suspicious activity spikes."

The new policy imposes a 90-day revenue suspension for first-time offenders and permanent bans for repeat violations. Chen emphasized that the system would rely primarily on user reporting rather than automated detection. "Our community knows authenticity when they see it," he said, adjusting his glasses. "We trust our users to police themselves."

Internal documents obtained by The Guardian reveal the policy emerged from emergency meetings where executives debated how to address what one memo called "the Gavalas precedent," a reference to the recent lawsuit against Google over its AI chatbot. "We cannot afford similar liability," the memo stated, "while maintaining our commitment to free speech."

The policy announcement coincided with rising energy prices linked to Middle East conflicts, creating what industry analysts called "a perfect storm of monetization challenges." RAC head of policy Simon Williams noted that fuel price increases were hitting household budgets just as digital revenue streams faced new restrictions. "It's a double squeeze," Williams said. "People are paying more at the pump while potentially losing income online."

X's implementation strategy relies heavily on what the company calls "three pillars of authentication": user reporting, algorithmic flagging, and manual review. However, internal communications show staffing for the manual review team has been cut by 40% this quarter. One remaining reviewer, speaking on condition of anonymity, described the process as "like finding needles in a haystack while management sets the hay on fire."

The policy has drawn criticism from digital rights advocates who question its practicality. "How exactly does X plan to distinguish between AI-generated content and, say, clever editing or stock footage?" asked Elena Rodriguez of the Digital Freedom Foundation. "This feels like performance art masquerading as policy."

Meanwhile, in North Carolina, the datacenter politics that have become a focal point in congressional races now intersect with the new content rules. Congresswoman Valerie Foushee, facing a tight primary race, issued a statement supporting "reasonable measures" while emphasizing local control over technology policy. Her opponent Nida Allam called the policy "a band-aid on a bullet wound" and renewed her call for a federal datacenter moratorium.

The announcement also comes as Wall Street indices show mixed reactions to Middle East developments. While the S&P 500 saw modest gains, the Dow Jones Industrial Average dipped slightly, reflecting what analysts called "uncertainty about content moderation's impact on social media valuations."

X employees described a chaotic rollout process. "We got the policy document thirty minutes before the public announcement," said one mid-level manager. "The compliance team is trying to build the plane while flying it, and we're all just hoping the wings don't fall off."

User reaction has been divided. Some creators welcomed the policy's aim of combating misinformation while questioning its method. "I've seen those fake war videos, and they're dangerous," said longtime X creator Marcus Thorne. "But I'm not sure suspending revenue is the right approach. It feels like punishing the symptom instead of treating the disease."

Others noted the irony of a platform built on engagement metrics now penalizing content that generates significant traffic. "They're basically saying 'create viral content, but not too viral, and definitely not the wrong kind of viral,'" commented digital strategist Rebecca Lin. "It's like telling firefighters they can't use water because it's too effective."

As the policy takes effect, X faces what industry watchers call "the enforcement dilemma." With millions of posts uploaded daily and limited moderation resources, the company must balance its stated principles with practical realities. Chen remained optimistic. "We believe in our community's ability to self-regulate," he said. "This policy simply provides the necessary framework."

But behind the confident statements, internal metrics show a different story. According to documents reviewed by The Guardian, X's systems currently flag less than 3% of suspected AI-generated content, and the appeals process for wrongly suspended accounts takes an average of 47 days. One engineer privately described the situation as "trying to stop a tsunami with a spaghetti strainer."

The policy's ultimate test may come during the next international crisis, when users typically flood platforms with both authentic and synthetic content. For now, X insists it's prepared. "We've established clear guidelines," Chen said, tapping his tablet with renewed confidence. "The lines are drawn."

As energy prices continue their upward climb and digital content boundaries shift, users find themselves navigating an increasingly complex landscape where the rules seem to change faster than the content itself. The only certainty appears to be that in the battle between authenticity and engagement, someone's revenue stream will always be on the line.